Best Practices for Sports Data Annotation Projects

Learn the essential best practices for planning and executing successful sports data annotation projects at scale.

So, you want to run a sports data annotation project? Welcome to the thunderdome. It sounds simple: "Just draw a box around the ball." But then you realize the ball is hidden behind a player, or it’s a blur, or, in the case of one memorable cricket project, a bird flew across the screen and the AI thought it was the ball. (True story.)

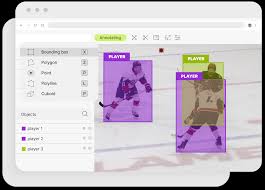

Best Practice #1: Define your ontology clearly. If you tell annotators to "label the player," does that include the helmet? The bat? The shadow? If you don't specify, half your team will include the shadow and the other half won't, and your model will have an existential crisis. We spend days just writing the "Guidelines" document before a single pixel is labeled.
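To make that concrete, here is a minimal sketch of what a machine-readable slice of the guidelines could look like. Everything in it is an assumption for illustration: the `LabelClass` structure, the `ONTOLOGY` entries, and the `box_rule` helper are hypothetical, not our actual guidelines format. The point is only that "does the box include the shadow?" gets one written answer instead of one per annotator.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LabelClass:
    """One entry in a hypothetical annotation ontology."""
    name: str
    includes: tuple[str, ...] = ()  # parts that belong inside the box
    excludes: tuple[str, ...] = ()  # parts that must stay outside the box


# Illustrative entries only; a real guideline would be agreed per project.
ONTOLOGY = {
    "player": LabelClass("player", includes=("helmet", "held bat"), excludes=("shadow",)),
    "ball": LabelClass("ball", includes=("motion blur",), excludes=("bird",)),
}


def box_rule(label: str, part: str) -> str:
    """Return 'include', 'exclude', or 'unspecified' for a part of a labeled object."""
    cls = ONTOLOGY[label]
    if part in cls.includes:
        return "include"
    if part in cls.excludes:
        return "exclude"
    # Anything unspecified is a gap in the guidelines, not a judgment call.
    return "unspecified"


print(box_rule("player", "shadow"))
print(box_rule("player", "jersey"))
```

An `unspecified` result is the useful one: it flags a question the guidelines have not answered yet, before half the team answers it one way and half the other.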

Best Practice #2: QA is not an afterthought; it’s the main event. Spot-checking 1% of the work is not enough. In sports, even a 1% error rate adds up: over a season of roughly 10,000 plays, that is about 100 plays missed. We use a multi-tier QA system where senior annotators review the work of juniors, and automated scripts check for logical impossibilities (like a player having three legs). Trust, but verify. Then verify again.
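The "three legs" check is easy to sketch. The version below is illustrative rather than our production script, and it assumes a hypothetical schema in which each annotation is a dict with `frame`, `player_id`, and `part` keys. The `MAX_PER_PLAYER` limits and the `find_impossible` function are likewise made up for the example.

```python
from collections import Counter

# Assumed anatomical limits per player per frame (hypothetical config).
MAX_PER_PLAYER = {"leg": 2, "arm": 2, "head": 1}


def find_impossible(annotations):
    """Flag (frame, player_id, part, count) wherever a part exceeds its limit.

    `annotations` is a list of dicts with 'frame', 'player_id', and 'part'.
    """
    counts = Counter((a["frame"], a["player_id"], a["part"]) for a in annotations)
    issues = []
    for (frame, player_id, part), n in sorted(counts.items()):
        limit = MAX_PER_PLAYER.get(part)
        if limit is not None and n > limit:
            issues.append((frame, player_id, part, n))
    return issues


sample = [
    {"frame": 12, "player_id": "p7", "part": "leg"},
    {"frame": 12, "player_id": "p7", "part": "leg"},
    {"frame": 12, "player_id": "p7", "part": "leg"},  # the third leg
    {"frame": 12, "player_id": "p7", "part": "head"},
    {"frame": 12, "player_id": "p9", "part": "leg"},
]

print(find_impossible(sample))  # [(12, 'p7', 'leg', 3)]
```

A check like this only catches what is physically impossible. It does nothing for a plausible-looking box in the wrong place, which is why the human review tiers still matter.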

Best Practice #3: Treat your annotators like athletes. This is mental endurance work. Staring at slow-motion footage for 8 hours requires focus. We encourage breaks, gamify the process, and ensure our team understands the sport they are watching. You can't annotate a pick-and-roll if you don't know what a pick-and-roll is. Context is king.